Year 1981:- “A computer on every desk” was a bold statement.

Back then, few people knew about computers or the power they could unleash. But can you imagine a world today without computers? Probably not!

With computers going mainstream in the late ’90s, this space has shown enormous growth. Devices now exist not only on desks but also in pockets and in the cloud. As the number and variety of devices grows, maintaining the existing ecosystem while incorporating new innovations is becoming very challenging. Developers must first understand each platform before they can write code for it, and with each new innovation the learning curve has become steeper.

Seizing this opportunity, Docker Inc. (previously dotCloud) came to the rescue and made the world simpler. They developed a platform that lets an application run in the same shape and form regardless of the machine it is deployed on. The concept quickly gained popularity among developers and is now going through a phase of wide adoption. But before we move forward, let’s look at the history: why we need technologies like Docker and what problems they solve.

Good Old days:-

Businesses run on applications, and back then building a single application could take anywhere from days to months, with no assurance from IT or developers that it would run smoothly. To remove that uncertainty, IT bought beefy machines to host applications. This involved a great deal of planning, huge upfront costs, rough guesses about how many users the application would have, and time to set up the server. It was a tedious process, and each application had its own server to avoid contention for physical resources and ports. Companies soon started to run out of physical space and bore huge costs for service, maintenance, electricity, cooling and people.

There was an immense opportunity to fill this gap, and VMware stepped in with its virtualization technology.

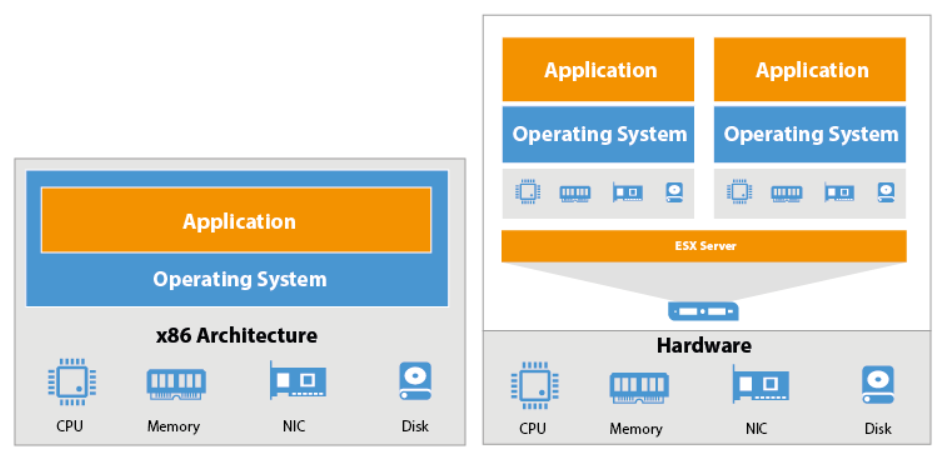

Once virtualization arrived, businesses started seeing a return on their IT investment. A server that had hosted only a single application could now host several. The beefy hardware IT had procured was finally being used efficiently, and the spare capacity from existing investments could serve High Availability and Fault Tolerance scenarios for the applications. So what is the difference between a physical machine and a virtual machine?

| Physical Servers | Virtual Machines |
| --- | --- |
| Large upfront costs | Small upfront costs |
| No need for licensing purchase | VM software licenses |
| Physical servers and additional equipment take a lot of space | A single physical server can host multiple VMs, thus saving space |
| Has a short life-cycle | Supports legacy applications |
| No on-demand scalability | On-demand scalability |
| Hardware upgrades are difficult to implement and can lead to considerable downtime | Hardware upgrades are easier to implement; the workload can be migrated to a backup site for the repair period to minimize downtime |
| Difficult to move or copy | Easy to move or copy |
| Poor capacity optimization | Advanced capacity optimization is enabled by load balancing |
| Doesn’t require any overhead layer | Some level of overhead is required for running VMs |
| Perfect for organizations running services and operations which require highly productive computing hardware for their implementation | Perfect for organizations running multiple operations or serving multiple users, which plan to extend their production environment in the future |

Virtualization made life a little easier for IT Ops, as unplanned downtime went down, but the dependency on the operating system remained. Each OS still required its own license, hardware resources, storage space, runtimes, dependencies and regular updates. On top of this, developers never had any assurance that their application would run the same way across all the available platforms. To bridge this gap, containers started to gain popularity, and Docker Inc. was one of the major players that made containers portable across the major platforms.

VMs vs Containers

With containers, developers can start a Docker container on their own machine, provided it runs the Docker runtime, and test the application’s functionality. Once that is tested, they can be reasonably confident the application will run the same way on any hosting platform that also runs the Docker runtime. So what is the difference between a container and a virtual machine?

| VMs | Containers |
| --- | --- |
| Heavyweight | Lightweight |
| Limited performance | Native performance |
| Each VM runs in its own OS | All containers share the host OS |
| Hardware-level virtualization | OS virtualization |
| Start-up time in minutes | Start-up time in milliseconds |
| Allocates required memory | Requires less memory space |
| Fully isolated and hence more secure | Process-level isolation, possibly less secure |
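
The local test workflow described before the table, where a developer starts a container on their own machine, could look roughly like the sketch below. It is only an illustration under assumptions: the helper names `build_run_command` and `run_in_container` are hypothetical, the `alpine` image is just a small public image chosen for the example, and the code shells out to the `docker run --rm` command rather than using any particular SDK.

```python
import shutil
import subprocess


def build_run_command(image, command):
    """Build the argument list for a one-off `docker run`.

    `--rm` asks Docker to remove the container once the command exits.
    (Hypothetical helper, named for this example only.)
    """
    return ["docker", "run", "--rm", image, *command]


def run_in_container(image, command, timeout=120):
    """Run `command` inside a throwaway container and return its stdout.

    Returns None when the docker CLI is not installed on this machine,
    so the sketch degrades gracefully instead of raising.
    """
    if shutil.which("docker") is None:
        return None
    result = subprocess.run(
        build_run_command(image, command),
        capture_output=True,  # collect stdout/stderr instead of printing them
        text=True,            # decode the output as str
        timeout=timeout,      # give up if the image pull or the command hangs
        check=False,          # leave exit-code handling to the caller
    )
    return result.stdout
```

Calling `run_in_container("alpine", ["echo", "hello"])` on a machine with a running Docker runtime should hand back whatever the command printed inside the container. The same call works unchanged on any other host with Docker available, which is the portability argument made above.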

What are your thoughts? Will innovation stop at containers, or is something new already in the pipeline?
